Welcome to the regular weekly cybersecurity news round-up, covering the articles published between November 23 and 29, 2020!

Hello and welcome to the weekend's usual weekly cybersecurity news round-up, covering the articles published between November 16 and 22, 2020.

Greetings! As usual on Sundays, this is a weekly cybersecurity news round-up, covering the articles published between November 9 and 15, 2020.

This is a transcript of a talk presented at CSNOG 2020; the video is at the end of the page.

Greetings! My name is Alexander Zubkov. I work at Qrator Labs, where we protect our customers against DDoS attacks and provide BGP analytics.

We started using Mellanox switches around 2 or 3 years ago. At that time we got acquainted with Switchdev in Linux, and today I want to share our experience with you.

Hello and welcome back to our weekly news recap! This time we look at the most exciting articles and papers published over the two weeks between October 26 and November 8, 2020.

Greetings, and welcome to the weekly news round-up! This Sunday, we are again looking at the relevant articles and research published between October 19 and 25, 2020.

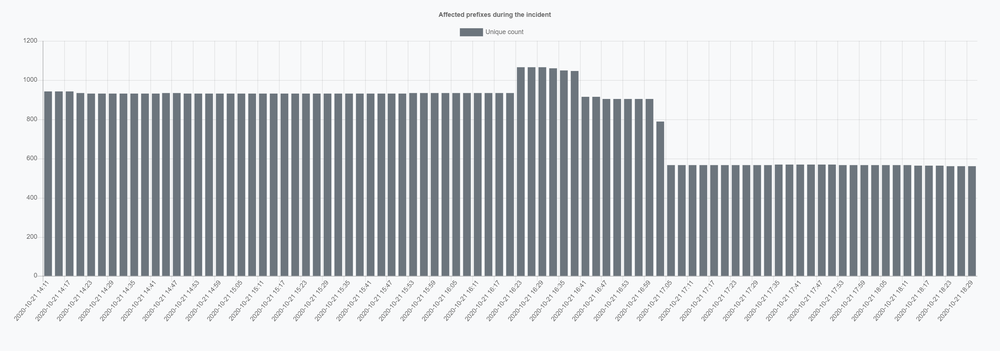

AS203, belonging to what was formerly known as "Level3", acquired by "CenturyLink" in 2016 and later rebranded as "Lumen" in 2020, is a frequent visitor in the incident reports of the Qrator.Radar team. We are not here to blame anyone, but so many routing incidents at a single organization are worrying. We hope this article will help you understand how even a small event can have an enormous impact when specific prerequisites are met.

Welcome to the regular networking and cybersecurity newsletter. Let's take a look at the most interesting articles published between October 12 and 18, 2020.

Hello and welcome to the regular networking and cybersecurity newsletter! Here are the relevant articles published between October 5 and October 11, 2020.

Welcome to the regular networking and cybersecurity newsletter, brought to you by Qrator Labs!

This time we look at the most interesting materials published between September 28 and October 4, 2020.