As we wrote in the 2018-2019 Interconnected Networks Issues and Availability Report at the beginning of this year, the arrival of TLS 1.3 was inevitable. Some time ago, we successfully deployed version 1.3 of the Transport Layer Security protocol. Having gathered and analyzed the data, we are now ready to highlight the most exciting parts of this transition.

As the IETF TLS Working Group chairs wrote in their article:

“In short, TLS 1.3 is poised to provide a foundation for a more secure and efficient Internet over the next 20 years and beyond.”

TLS 1.3 arrived ten years after its predecessor, TLS 1.2. Qrator Labs, like the IT industry as a whole, followed the development process closely, from the initial draft through each of the 28 versions, as the protocol matured into a balanced and manageable design that we are ready to support in 2019. Market support is already evident, and we want to keep pace in implementing this robust, proven security protocol.

Eric Rescorla, the sole author of the TLS 1.3 specification and CTO of Firefox, told The Register:

“It's a drop-in replacement for TLS 1.2, uses the same keys and certificates, and clients and servers can automatically negotiate TLS 1.3 when they both support it,” he said. “There's pretty good library support already, and Chrome and Firefox both have TLS 1.3 on by default.”
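Rescorla's point about automatic negotiation shows up in ordinary client code. Below is a minimal sketch using Python's standard `ssl` module (our choice for illustration; the helper name `make_client_context` is hypothetical), assuming Python 3.7+ linked against an OpenSSL build with TLS 1.3 support:

```python
import ssl


def make_client_context(require_tls13: bool = False) -> ssl.SSLContext:
    """Build a client-side TLS context (hypothetical helper).

    By default the context accepts TLS 1.2 and TLS 1.3, so the handshake
    negotiates TLS 1.3 whenever the server supports it and falls back to
    TLS 1.2 otherwise. Setting require_tls13 refuses anything older.
    """
    # Certificate verification and hostname checking are on by default.
    ctx = ssl.create_default_context()
    ctx.minimum_version = (
        ssl.TLSVersion.TLSv1_3 if require_tls13 else ssl.TLSVersion.TLSv1_2
    )
    # Explicitly let OpenSSL pick the highest version both sides support.
    ctx.maximum_version = ssl.TLSVersion.MAXIMUM_SUPPORTED
    return ctx
```

After wrapping a socket, for example `tls = ctx.wrap_socket(sock, server_hostname=host)`, calling `tls.version()` reports which version was actually negotiated. Leaving the minimum at TLS 1.2 keeps older servers reachable, which is what makes the upgrade a drop-in one; raising it to 1.3 is a separate policy decision.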

A list of current TLS 1.3 implementations is available on GitHub for anyone looking for the best-fitting library: https://github.com/tlswg/tls13-spec/wiki/Implementations. Adoption of the upgraded protocol is clearly going to be, and already is, fast and widespread, since everyone now understands how fundamental encryption is in the modern world.

What has changed compared to TLS 1.2?

From the Internet Society’s article:

“How is TLS 1.3 improving things?

TLS 1.3 offers some technical advantages such as a simplified handshake to establish secure connections, and allow clients to resume sessions with servers more quickly. That should reduce setup latency and the number of failed connections on weak links that are often used as a justification for maintaining HTTP-only connections.

Just as importantly, it also removes support for several outdated and insecure encryption and hashing algorithms that are currently permitted (although no longer recommended) to be used with earlier versions of TLS, including SHA-1, MD5, DES, 3DES, and AES-CBC, while adding support for newer cipher suites. Other enhancements include more encrypted elements of the handshake (e.g., the exchange of certificate information is now encrypted) to reduce hints to potential eavesdroppers, as well as improvements to forward secrecy when using particular key exchange modes so that communications at a given moment in time should remain secure even if the algorithms used to encrypt them are compromised in the future”.
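The removals described above are visible in cipher suite names alone. RFC 8446 defines only five TLS 1.3 cipher suites, all AEAD-based, and none of them names a key exchange, since that is negotiated separately. The sketch below (hypothetical helpers `legacy_reasons` and `audit`; the marker list is an illustrative heuristic, not an exhaustive check) sorts a configured list into TLS 1.3 suites and legacy ones, noting which outdated primitives each legacy name carries:

```python
# The five cipher suites defined for TLS 1.3 in RFC 8446, Appendix B.4.
TLS13_SUITES = {
    "TLS_AES_128_GCM_SHA256",
    "TLS_AES_256_GCM_SHA384",
    "TLS_CHACHA20_POLY1305_SHA256",
    "TLS_AES_128_CCM_SHA256",
    "TLS_AES_128_CCM_8_SHA256",
}

# Illustrative substrings for primitives TLS 1.3 no longer allows (not exhaustive).
LEGACY_MARKERS = ("MD5", "3DES", "_DES_", "RC4", "_CBC_")


def legacy_reasons(suite):
    """List the outdated primitives found in a pre-1.3 IANA suite name."""
    reasons = []
    # Static RSA key transport has no forward secrecy and was dropped in 1.3.
    if suite.startswith("TLS_RSA_WITH_"):
        reasons.append("static RSA key exchange")
    reasons += [m.strip("_") for m in LEGACY_MARKERS if m in suite]
    # IANA names ending in "_SHA" use HMAC-SHA-1 for record integrity.
    if suite.endswith("_SHA"):
        reasons.append("SHA-1")
    return reasons


def audit(suites):
    """Split suite names into TLS 1.3 suites and legacy suites with reasons."""
    usable, rejected = [], {}
    for suite in suites:
        if suite in TLS13_SUITES:
            usable.append(suite)
        else:
            rejected[suite] = legacy_reasons(suite)
    return usable, rejected
```

An empty reason list, as for `TLS_ECDHE_RSA_WITH_AES_128_GCM_SHA256`, means the suite is a sound TLS 1.2 choice that simply does not exist in TLS 1.3.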

DDoS and modern protocol development

As you may have heard, there were significant tensions in the IETF TLS working group during the protocol's development, and even afterwards. It is now evident that some enterprises (including financial institutions) will have to change how their network security is built in order to adapt to perfect forward secrecy (PFS), which is now built into the protocol.

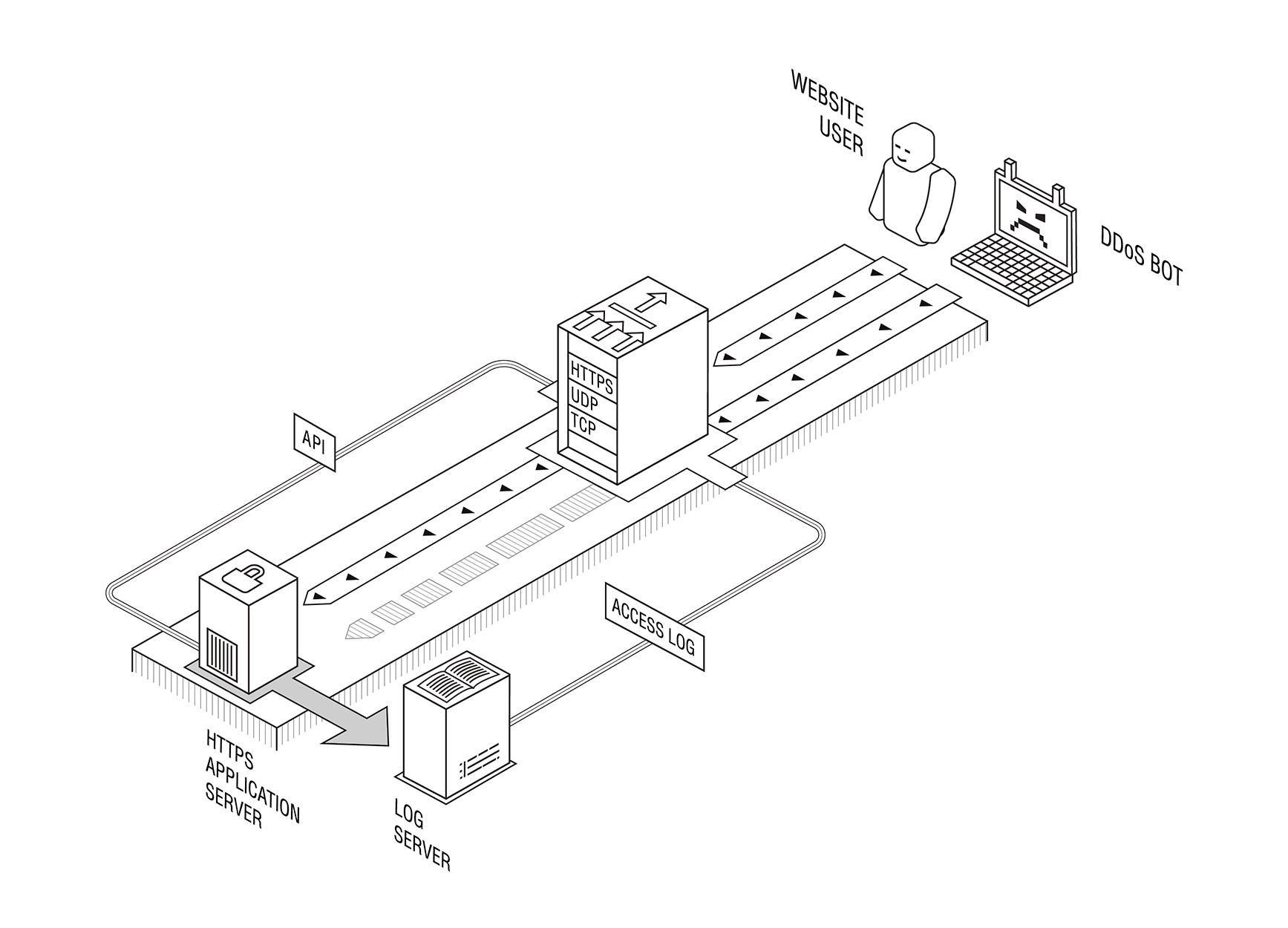

The reasons why this might be required are outlined in a draft written by Steve Fenter. The 20-page document describes several use cases in which an enterprise may want to perform out-of-band traffic decryption (which PFS rules out), including fraud monitoring, regulatory requirements, and Layer 7 DDoS protection.

While we are in no position to speak to the regulatory requirements, our own Layer 7 DDoS mitigation product (including the solution that does not require a customer to disclose any sensitive data to us) was designed with PFS in mind from the start, back in 2012, and our customers needed no changes to their infrastructure after the server-side TLS version upgrade.
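To see why PFS rules out passive, out-of-band decryption, consider a toy ephemeral Diffie-Hellman exchange. This is a simplified sketch, not TLS 1.3's actual key schedule (which feeds the (EC)DHE output through HKDF); the modulus, generator, and function names are hypothetical and deliberately insecure:

```python
import hashlib
import secrets

# Toy parameters for illustration only: this modulus is far too weak for real
# use. TLS 1.3 uses named (EC)DHE groups such as x25519 or ffdhe2048.
P = 2**127 - 1  # a Mersenne prime
G = 3


def ephemeral_keypair():
    """Draw a fresh private exponent for a single handshake."""
    priv = secrets.randbelow(P - 3) + 2  # uniform in [2, P - 2]
    return priv, pow(G, priv, P)


def session_key(my_priv, peer_pub):
    """Both sides compute G^(a*b) mod P and hash it into a session key."""
    shared = pow(peer_pub, my_priv, P)
    return hashlib.sha256(shared.to_bytes(16, "big")).digest()


def handshake():
    """Run one toy exchange and return (client_key, server_key)."""
    c_priv, c_pub = ephemeral_keypair()
    s_priv, s_pub = ephemeral_keypair()
    # Only c_pub and s_pub cross the wire. A passive observer, even one holding
    # the server's long-term certificate key (which in TLS 1.3 only signs the
    # handshake), has no input from which to recompute the session key.
    client_key = session_key(c_priv, s_pub)
    server_key = session_key(s_priv, c_pub)
    # The private exponents go out of scope here and are never stored.
    return client_key, server_key
```

Each call to `handshake()` yields a fresh key that nothing long-lived on the server can reproduce later. That is why a device receiving only a copy of the traffic plus the server's certificate key cannot decrypt TLS 1.3 sessions, while an inline proxy that terminates TLS itself can.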

One concern, though, is that the development of next-generation protocol suites and features usually depends heavily on academic research, and the state of that research for the DDoS mitigation industry is quite poor. Section 4.4 of the IETF draft “QUIC manageability”, part of the future QUIC protocol suite, is a perfect example: it states that “current practices in detection and mitigation of [DDoS attacks] generally involve passive measurement using network flow data”. In reality, this is very rarely the case in enterprise environments, and only partly so in ISP setups, so it is hardly the “general case” in practice. It is, however, the general case in academic research papers, which most of the time are not backed by proper implementations and real-world testing against the whole range of potential DDoS attacks, including application-layer ones. With TLS now deployed worldwide, application-layer attacks can no longer be handled by passive measurement at all.

Likewise, we do not yet know how DDoS mitigation hardware manufacturers will adapt to the TLS 1.3 reality. Given the technical complexity of supporting the protocol out of band, it could take them some time.

Setting the right direction for academic research is a challenge for DDoS mitigation providers in 2019. One venue for this is the SMART research group within the IRTF, where researchers can collaborate with industry to refine their knowledge of the problem area and their research directions. We warmly welcome any researchers who would like to reach us with questions or suggestions, either in that research group or via email: rnd@qrator.net.